RIF

This album includes jams from the new Orphic-DJ app, which brings the Pulsar Generative Sequencer to Android and iOS. Paired with the DJ Panel, the Leslie, and a Timer, the sequencer lets music of any style evolve according to the user’s input and taste. Built as a standalone app with a separate audio graph, it shares plugins and panels with Orphic-FM.

New Drop effects in the DJ Panel provide Coachella-inspired sounds and graphics. Arrangements are constructed in Kotlin with a data hierarchy: sounds are assigned to tracks, and tracks are assembled into band mates who perform based on the characteristics of the song, the individual band mate, and user input to produce flowing, evolving music.
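To make the hierarchy concrete, here is a rough sketch in C++, the language of the DSP layer. The actual arrangement code is Kotlin. Every type and field below is hypothetical, and so is the `performanceLevel` rule. Multiplying song energy, a band mate's loudness trait, and user input is just one plausible way to combine the three influences.

```cpp
#include <cassert>
#include <string>
#include <vector>

// Hypothetical C++ mirror of the Kotlin arrangement hierarchy.
struct Sound {
    std::string name;                 // e.g. "kick" or "sub bass"
};

struct Track {
    Sound sound;                      // the sound assigned to this track
    std::vector<float> steps;         // per-step gate values
};

struct BandMate {
    std::string role;                 // e.g. "Drummer"
    float loudness = 0.5f;            // personality trait in [0, 1]
    std::vector<Track> tracks;        // tracks this band mate performs
};

struct Song {
    float energy = 0.5f;              // song-level characteristic in [0, 1]
    std::vector<BandMate> band;
};

// Build the hierarchy bottom-up: a sound goes on a new track, the track goes to a band mate.
void assignSound(BandMate& mate, const std::string& soundName) {
    mate.tracks.push_back(Track{Sound{soundName}, {}});
}

// Assumed rule: song energy x individual loudness x user input (all in [0, 1]).
float performanceLevel(const Song& song, const BandMate& mate, float userInput) {
    assert(userInput >= 0.0f && userInput <= 1.0f);
    return song.energy * mate.loudness * userInput;
}
```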

In this app, everything is left to chance: Markov chains define the probabilities that generate sounds and compositions. A lot can go wrong with this setup, but the goal is to leave room for creativity while defining guidelines and constraints that manage the overall flow and create something compelling.

One Shot

An extremely cool drop-sound embellishment, synced flawlessly with the turntable spin physics, provides a fun, musical experience on all platforms.

Coachella-inspired DJ Drop features implemented in a day by 4.7.

Bell Tolls

This track showcases the comprehensive song arrangement structure defined in PulsarVibe.kt. It also highlights the power of the four knobs (Energy, Complexity, Mood, and Space) for shaping unique, compelling sounds from the Pulsar engine.

Never send to know for whom the bell tolls; it tolls for thee. (John Donne, 1624)

Ant Hill

A nice demonstration of using the built-in timer to control the arrangement and automatically fade out at the end.
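The sketch below shows one way a session timer could drive both the arrangement and the fade-out. `timerFadeGain` and `sectionForTime` are hypothetical helpers. The linear fade curve and the even split across sections are assumptions, and the app does not necessarily work this way.

```cpp
#include <cassert>

// Hypothetical: gain for a session of totalSeconds that fades out linearly
// over its final fadeSeconds.
float timerFadeGain(double elapsedSeconds, double totalSeconds, double fadeSeconds) {
    assert(totalSeconds > 0.0 && fadeSeconds >= 0.0);
    if (elapsedSeconds >= totalSeconds) return 0.0f;           // timer expired: silent
    const double remaining = totalSeconds - elapsedSeconds;
    if (fadeSeconds <= 0.0 || remaining >= fadeSeconds) return 1.0f;  // before the fade window
    return static_cast<float>(remaining / fadeSeconds);        // linear ramp toward 0
}

// Hypothetical: choose an arrangement section from timer progress,
// splitting the session evenly across sectionCount sections.
int sectionForTime(double elapsedSeconds, double totalSeconds, int sectionCount) {
    assert(totalSeconds > 0.0 && sectionCount > 0);
    double progress = elapsedSeconds / totalSeconds;
    if (progress < 0.0) progress = 0.0;
    const int index = static_cast<int>(progress * sectionCount);
    return index >= sectionCount ? sectionCount - 1 : index;   // hold the last section
}
```

For a 5-minute session with a 30-second fade, `timerFadeGain(285.0, 300.0, 30.0)` returns 0.5, the halfway point of the fade.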

All in all, we are all just ants on the hill.

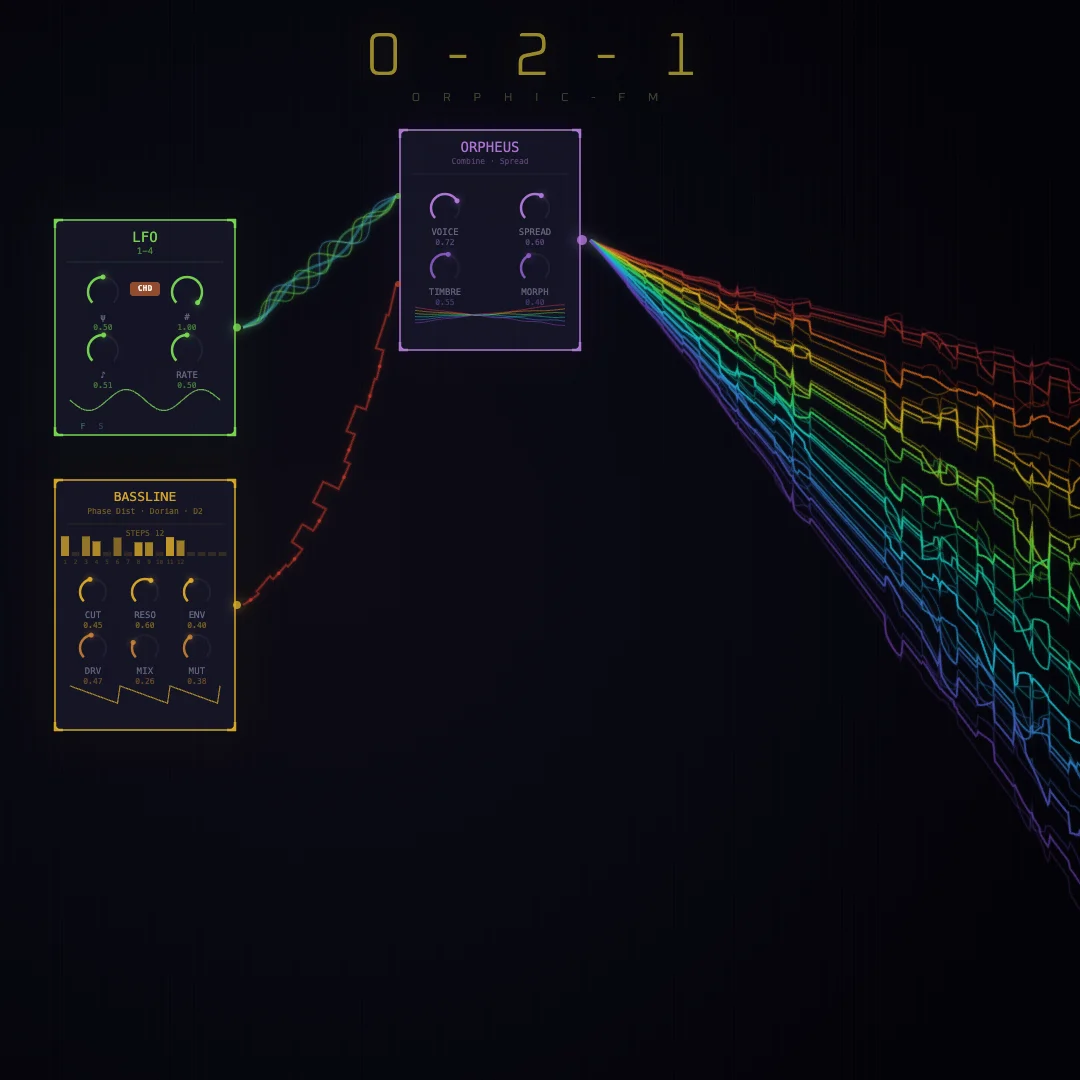

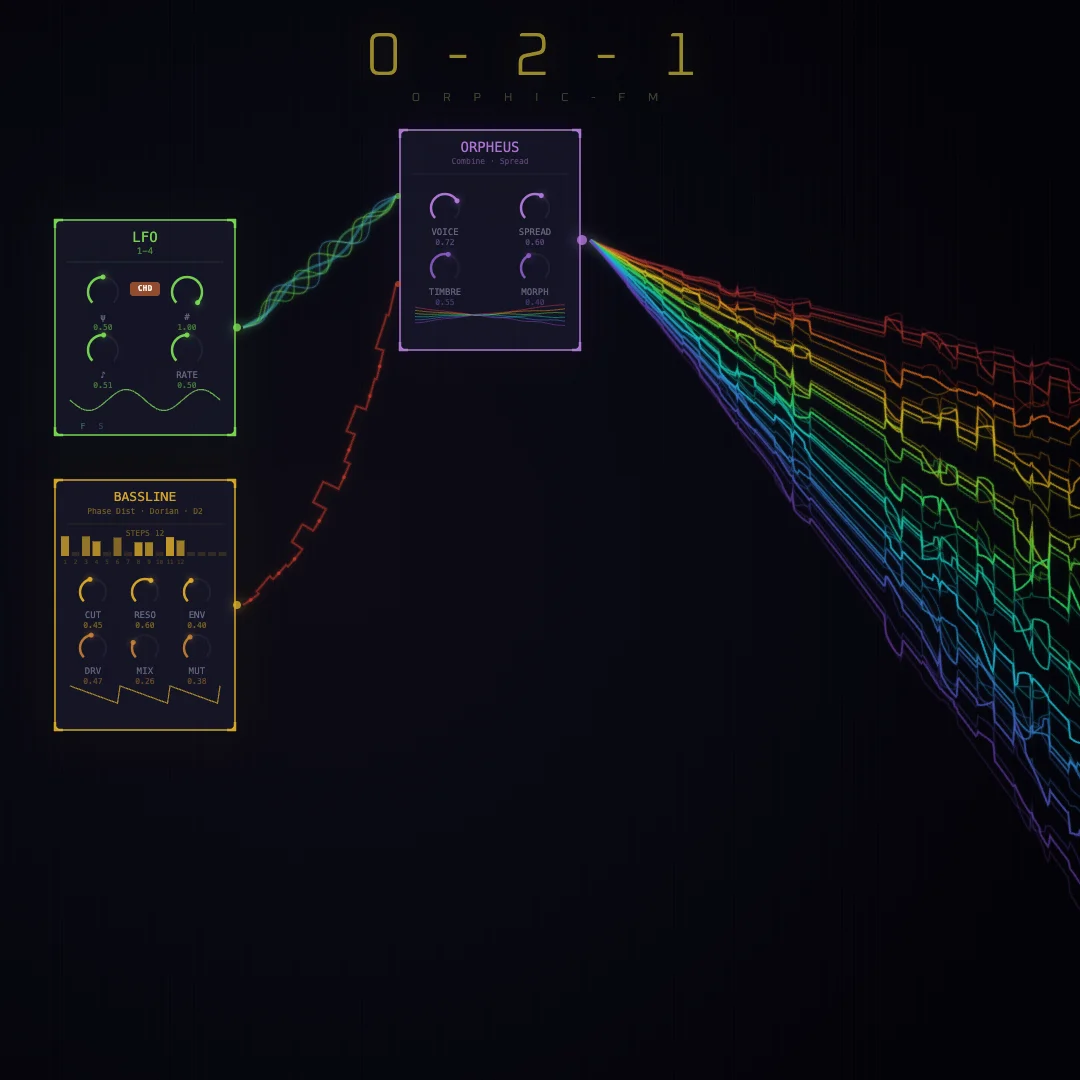

0-2-1

0-2-1 marks the 1.0 release of Orphic-FM. The big architectural shift: a thin C++ audio bus that wraps the Mutable Instruments OSS DSP code directly, replacing the previous Kotlin-only signal path. This unlocks native performance on all platforms (Android, iOS, Desktop) and makes it trivial for Claude to wire up new instruments against the MI engines.

Dog House

Dog House is built on the Pulsar engine’s transformation from a beat machine into a generative song arrangement engine. At its core, Markov chord progressions drive the harmonic movement: weighted probability matrices determine which chord comes next, so no two plays through the same section sound identical. The arrangement is a 6-section graph (intro → verse → chorus → solo → breakdown → outro) where the transitions between sections are also probabilistic, creating songs that follow a familiar blues arc but never repeat exactly.
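A Markov chord step boils down to sampling the next chord from the current chord's row of weights. The sketch below does this with `std::discrete_distribution`. The three-chord set and the weights are invented for illustration. The same step could walk the section graph if the chords were swapped for sections.

```cpp
#include <cassert>
#include <random>
#include <string>
#include <vector>

// Hypothetical chord set; indices into this list are the Markov states.
const std::vector<std::string> kChords = {"I7", "IV7", "V7"};

// Row i holds the relative weights of moving from chord i to each chord j.
// Invented values, not the Pulsar engine's actual matrices.
const std::vector<std::vector<double>> kTransitions = {
    {0.2, 0.5, 0.3},  // from I7
    {0.6, 0.1, 0.3},  // from IV7
    {0.7, 0.2, 0.1},  // from V7
};

const std::string& chordName(int index) { return kChords.at(index); }

// One Markov step: sample the next chord index from the current chord's row.
int nextChord(int current, std::mt19937& rng) {
    const auto& row = kTransitions.at(current);
    std::discrete_distribution<int> pick(row.begin(), row.end());
    return pick(rng);
}

// Generate a progression of `length` chords, starting from `start`.
std::vector<int> progression(int start, int length, std::mt19937& rng) {
    assert(length > 0);
    std::vector<int> out{start};
    while (static_cast<int>(out.size()) < length) {
        out.push_back(nextChord(out.back(), rng));
    }
    return out;
}
```

Seeding the generator differently on each play is what keeps two passes through the same section from matching. A fixed seed reproduces a progression exactly.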

Four virtual band members — Drummer, Bassist, Keys, and FX — interact through personality traits (loudness, creativity, swing, drag) and handoff/pull-in matrices that govern who plays lead and who locks in behind them. Each member runs 32-step patterns with per-track bar strategies (Mutate, Fill, Call & Response) that evolve the patterns over time. In the solo section, Jam mode kicks in — band members lock into combos and riff on a shared lick, mutating it with each pass to create the spontaneous call-and-response feel of a live session. Dedicated Pulsar delay and reverb effect units (independent of the main voice chain) add spatial depth, while per-track mod LFOs and hold-step logic create evolving textures and drones beneath the arrangement.
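The Mutate and Fill bar strategies could look something like this on a 32-step pattern. Both functions are hypothetical. Scaling the flip probability by a creativity trait is an assumption about how personality might feed into mutation.

```cpp
#include <array>
#include <cassert>
#include <random>

constexpr int kSteps = 32;
using Pattern = std::array<bool, kSteps>;  // true = the step triggers

// Hypothetical "Mutate": each step flips with probability 0.5 * creativity,
// so creativity 0 never changes the pattern and 1 is a coin flip per step.
Pattern mutate(const Pattern& in, float creativity, std::mt19937& rng) {
    assert(creativity >= 0.0f && creativity <= 1.0f);
    std::bernoulli_distribution flip(0.5 * creativity);
    Pattern out = in;
    for (bool& step : out) {
        if (flip(rng)) step = !step;
    }
    return out;
}

// Hypothetical "Fill": switch on the last fillSteps steps of the bar.
Pattern fill(const Pattern& in, int fillSteps) {
    assert(fillSteps >= 0 && fillSteps <= kSteps);
    Pattern out = in;
    for (int i = kSteps - fillSteps; i < kSteps; ++i) out[i] = true;
    return out;
}
```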

This track is also the debut of the standalone DJ App, which packages Orphic modules (Pulsar, DJ turntable, Leslie, and Distortion) into a mobile-friendly UX with full Media Session integration across Android, iOS, and macOS — complete with a foreground service to keep the jam alive when backgrounded.

A Phrygian jam session using the DJ turntable to add some Coachella-inspired texture.

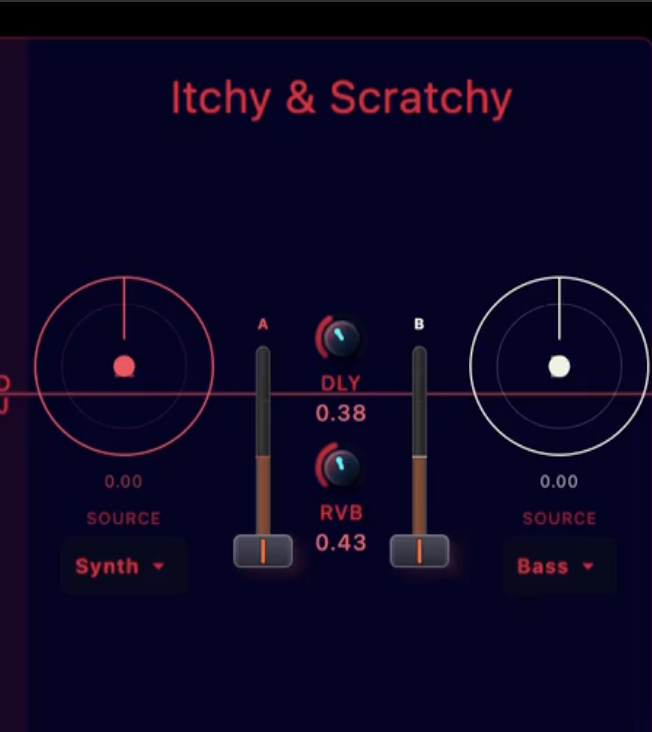

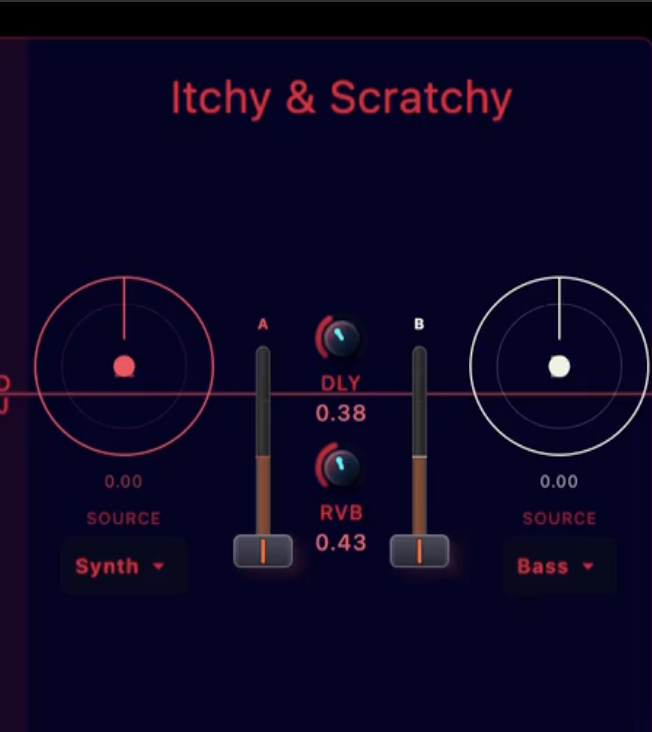

Itchy Scratchy

Itchy Scratchy puts the new DJ turntable front and center. The C++ DSP layer captures audio into a circular buffer and plays it back with cubic Hermite interpolation, giving scratches a warm, vinyl-like pitch response. Each deck can tap any source (Synth, Drums, Bass, or Master), and a constant-power crossfader blends between them without a volume dip at center. On the Kotlin side, an MVI ViewModel drives a 60 Hz physics simulation for platter momentum, while the Compose UI renders radial waveform platters and a vertical crossfader in the Cleveland Guardians palette.
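Two of these DSP ideas are standard and easy to sketch. The first is a 4-point cubic Hermite (Catmull-Rom) read from a circular buffer. The second is a cos/sin constant-power crossfade law. `CaptureBuffer` and `constantPowerGains` are hypothetical names, not the engine's actual classes.

```cpp
#include <cassert>
#include <cmath>
#include <cstddef>
#include <vector>

// Hypothetical per-deck capture buffer, sized up front so the audio thread never allocates.
class CaptureBuffer {
public:
    explicit CaptureBuffer(std::size_t size) : data_(size, 0.0f) { assert(size >= 4); }

    // Append one sample, overwriting the oldest once the buffer is full.
    void write(float sample) {
        data_[writePos_] = sample;
        writePos_ = (writePos_ + 1) % data_.size();
    }

    // Read at a fractional buffer index with 4-point cubic Hermite interpolation.
    float read(double position) const {
        const long n = static_cast<long>(data_.size());
        const double whole = std::floor(position);
        const long i = static_cast<long>(whole);
        const float t = static_cast<float>(position - whole);
        const float y0 = at(i - 1, n), y1 = at(i, n), y2 = at(i + 1, n), y3 = at(i + 2, n);
        const float c0 = y1;
        const float c1 = 0.5f * (y2 - y0);
        const float c2 = y0 - 2.5f * y1 + 2.0f * y2 - 0.5f * y3;
        const float c3 = 0.5f * (y3 - y0) + 1.5f * (y1 - y2);
        return ((c3 * t + c2) * t + c1) * t + c0;
    }

private:
    // Wrap any index (including negative ones) into the ring.
    float at(long index, long n) const {
        const long wrapped = ((index % n) + n) % n;
        return data_[static_cast<std::size_t>(wrapped)];
    }

    std::vector<float> data_;
    std::size_t writePos_ = 0;
};

// Constant-power crossfade for x in [0, 1]: gainA^2 + gainB^2 == 1 everywhere,
// so the center position sits near -3 dB per deck instead of dipping.
void constantPowerGains(float x, float& gainA, float& gainB) {
    const float halfPi = 1.57079632679f;
    gainA = std::cos(x * halfPi);
    gainB = std::sin(x * halfPi);
}
```

Catmull-Rom interpolation reproduces straight lines exactly, so reading a ramp at position 2.5 returns 2.5. On real audio it traces a smoother curve between samples than linear interpolation does, which matters when a scratch slows the playhead to a crawl.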

The new dual turntable module, with scratch physics and crossfade, brings DJ-style performance to the synth engine.

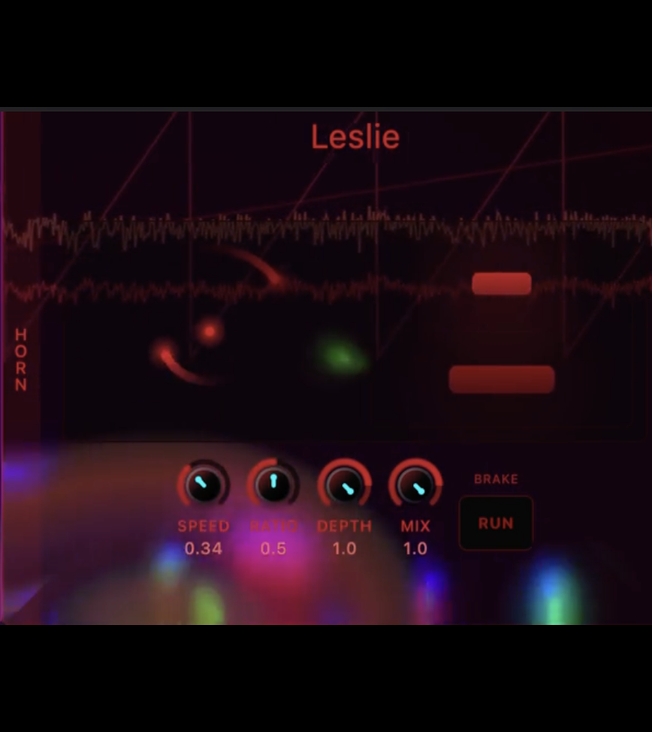

Lazy Susan

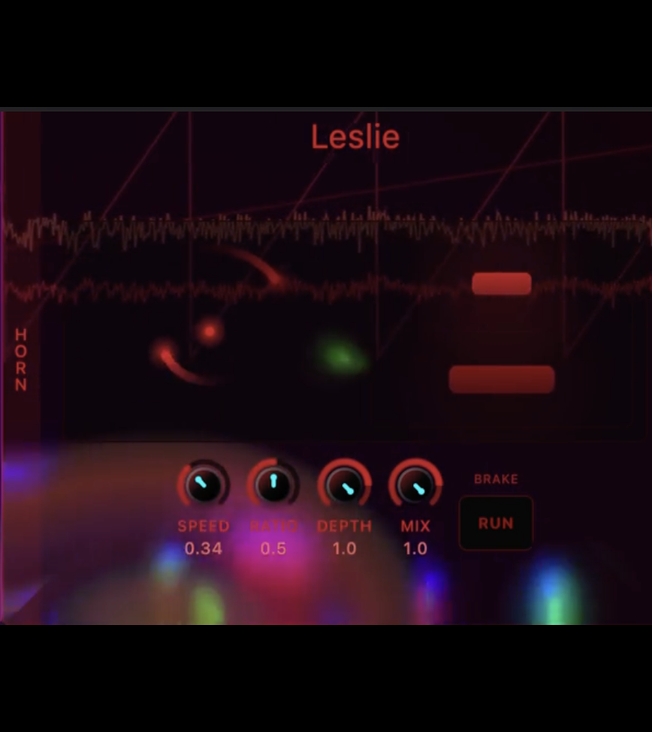

Lazy Susan showcases the new Horn (Leslie rotary speaker) DSP effect. The C++ engine splits the input through a Linkwitz-Riley crossover into separate horn and drum rotor channels, each spinning with independent inertia to model the real-world lag of heavy rotating elements. Stereo amplitude modulation recreates the Doppler-like swirl that defines the Leslie sound. On the Kotlin side, a physics-based animation drives the rotor visuals in the HornPanel, with tactile controls for Speed, Ratio, Depth, Amount, Mix, and Brake. Rotor phase and audio peaks feed back into the engine’s visualization ring buffers, so you can watch the rotors spin in sync with what you hear.
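Rotor inertia can be modeled as a one-pole lag on rotation speed, with a longer time constant for the heavy drum than for the light horn. Rotor phase then drives opposing left/right gains. This hypothetical sketch covers only that idea and leaves out the Linkwitz-Riley crossover. The inertia values are illustrative.

```cpp
#include <cassert>
#include <cmath>

// Hypothetical rotor: speed chases a target with a one-pole lag, and heavier
// rotors get a longer time constant.
struct Rotor {
    double speedHz = 0.0;   // current rotation rate
    double phase = 0.0;     // rotation phase in [0, 1)
    double inertiaSec;      // time constant; larger means a heavier, slower rotor

    explicit Rotor(double inertia) : inertiaSec(inertia) { assert(inertia > 0.0); }

    // Advance one sample toward targetHz.
    void tick(double targetHz, double sampleRate) {
        const double coeff = 1.0 - std::exp(-1.0 / (inertiaSec * sampleRate));
        speedHz += (targetHz - speedHz) * coeff;
        phase += speedHz / sampleRate;
        phase -= std::floor(phase);  // wrap to [0, 1)
    }

    // Stereo amplitude modulation: as one side gets louder, the other gets quieter.
    void gains(double depth, double& left, double& right) const {
        const double kTwoPi = 6.283185307179586;
        const double s = std::sin(kTwoPi * phase);
        left = 1.0 - depth * 0.5 * (1.0 + s);
        right = 1.0 - depth * 0.5 * (1.0 - s);
    }
};
```

When `targetHz` switches between a slow and a fast speed, the horn snaps to the new rate while the drum takes noticeably longer to catch up. That is the lag described in the paragraph above.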

The Leslie Horn Panel adds chorus you can feel with your ears. Headphones on for this one.

Insight

Insight showcases the new portamento and legato behavior in the bass unit. A three-tier gate system now classifies each step: rest (≤0.3), slide (0.3–0.7), or normal trigger (>0.7). During slide steps, pitch smoothing (portamento) glides between notes with the glide time controlled by the envelope parameter. The envelope generator was updated to hold a sustain floor while the gate stays open, enabling smooth legato transitions without retriggering. The trigger logic skips retriggering entirely on slide steps, allowing the signal to flow continuously — the bass line breathes instead of stuttering between notes.
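The gate thresholds translate directly into a classifier. Portamento can be sketched as a one-pole pitch smoother whose glide time comes from the envelope parameter. Only the thresholds come from these notes. The class and function names and the exponential glide curve are assumptions.

```cpp
#include <cassert>
#include <cmath>

enum class StepKind { Rest, Slide, Trigger };

// Thresholds from the notes: rest (<= 0.3), slide (above 0.3 up to 0.7), trigger (> 0.7).
StepKind classifyGate(float gate) {
    if (gate <= 0.3f) return StepKind::Rest;
    if (gate <= 0.7f) return StepKind::Slide;
    return StepKind::Trigger;
}

// Slide steps keep the envelope open instead of retriggering it.
bool shouldRetrigger(StepKind kind) { return kind == StepKind::Trigger; }

// Hypothetical one-pole pitch smoother for portamento.
class Glide {
public:
    explicit Glide(double sampleRate) : sampleRate_(sampleRate) { assert(sampleRate > 0.0); }

    // glideSeconds stands in for the envelope parameter that sets glide time.
    void setTarget(double hz, StepKind kind, double glideSeconds) {
        if (kind == StepKind::Rest) return;          // rests leave pitch untouched
        target_ = hz;
        if (kind == StepKind::Trigger || glideSeconds <= 0.0) {
            current_ = hz;                           // normal step: jump straight to pitch
            coeff_ = 1.0;
        } else {
            coeff_ = 1.0 - std::exp(-1.0 / (glideSeconds * sampleRate_));
        }
    }

    // Call once per sample; moves the current pitch a fraction of the way to the target.
    double next() {
        current_ += (target_ - current_) * coeff_;
        return current_;
    }

private:
    double sampleRate_;
    double current_ = 0.0;
    double target_ = 0.0;
    double coeff_ = 1.0;
};
```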

Sliding into the groove. Portamento and legato bring the bass to life with smooth pitch transitions and sustained expression.

WYSIWYG

WYSIWYG is the first track produced entirely on the new C++ audio bus — the result of a massive engine migration that removed JSyn and all Kotlin DSP infrastructure, deleting ~28,000 lines of code across 214 files. Every plugin was stripped down to a pure state container that forwards parameters to C++ via NativeDspBridge, unifying Desktop (JNI + miniaudio), Android (Oboe), and WASM (Emscripten Worker) under a single native engine. The hard-clipped master output was replaced with tanh() soft saturation, letting the Bassline funk and PolyLFO orchestra stack without digital distortion. The signal visualization reads directly from the C++ peak monitor flow, giving a true picture of what the sound actually looks like — what you see is what you get.
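The difference between the hard clip and tanh() is easy to see side by side. This is a minimal sketch, not the engine's actual master stage, and `processMaster` is a hypothetical name.

```cpp
#include <algorithm>
#include <cassert>
#include <cmath>

// Before: a hard clip flattens peaks abruptly at +/-1.
float hardClip(float x) { return std::max(-1.0f, std::min(x, 1.0f)); }

// After: tanh soft saturation is nearly linear near zero (tanh(x) ~ x)
// and approaches +/-1 smoothly as summed voices pile up.
float softSaturate(float x) { return std::tanh(x); }

// Apply to a master buffer in place, with no allocation on the audio thread.
void processMaster(float* buffer, int frames) {
    assert(buffer != nullptr || frames == 0);
    for (int i = 0; i < frames; ++i) buffer[i] = softSaturate(buffer[i]);
}
```

A hard clip pins an input of 2.0 at exactly 1.0. The tanh curve gives about 0.96 and keeps bending smoothly, so stacked voices saturate instead of crackling.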

Seeing is believing. A Bassline funk combined with a PolyLFO orchestra produces sonic bliss.

Crossing the Chasm

Crossing the Chasm was feature-driven – still Kotlin, but with a new focus on front-end architecture and shipping as many features as possible. Self-registering UI panels, Panel Sets, the full Plaits engine suite, Dattorro plate reverb, speech synthesis, the Marbles-based Flux module, MediaPipe hand tracking, and ASL Maestro Mode all landed during this album. The codebase went from prototype to something resembling a real product.

The Balch Hotel

Written at the Balch Hotel in Dufur, Oregon, with Mt. Hood visible in the background. The day before (February 27th), the Mt. Hood lava visualization was added to the synth and rendering performance was optimized. On recording day, control event handling was unified via setPluginControl. The cozy, charming 1907 hotel room and the sunset behind Mt. Hood, watched through the window with my fabulous wife Benedicte, were captured perfectly in this visualization and song.

This was written in Dufur, OR at the fabulous Balch Hotel with Mt. Hood in the background.

Goodnight Brasi

This track showcases 4 new oscillators and the Bender toy on Desktop. The song was inspired by the big Block news on February 26th, which left the community reeling. That day, an aquarium visualization was added to the synth, along with enhanced AI agent integration. For some reason, the aquarium reminded me of The Godfather. I experimented with AR, but so far it is not working as expected. The gesture control is still a huge WIP, as it easily confuses M, S, and H.

This song features 4 new oscillators and a new Bender toy on Desktop. Orpheus sleeps above the fishes.

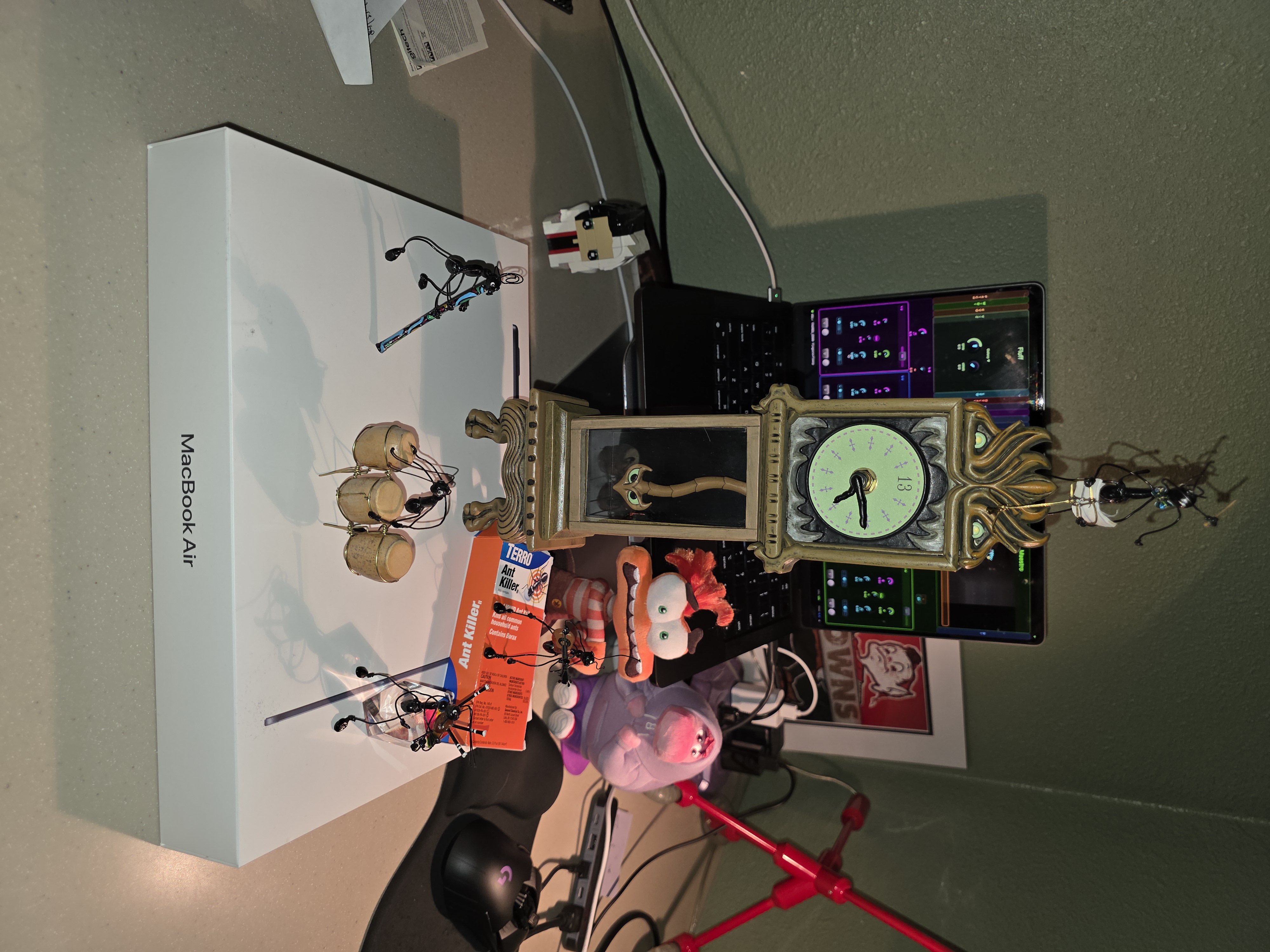

Handwavey

The first track performed using Maestro Mode – conducting the synth with hand gestures via MediaPipe. Camera-based hand tracking was added on February 21st, along with CPU-saving inactive DSP plugin disabling. On recording day (Feb 22nd), Maestro Mode was reworked to use individual voice gating with Thumbs-Up hold control, ASL gesture control routing was made context-dependent, and state snapshotting was centralized for REPL and gestures. The core:foundation module had also been decomposed into specialized modules with a JVM 21 upgrade just two days prior. The aspect ratio (1006/1080) captures the portrait-mode camera view of the hand tracking in action.

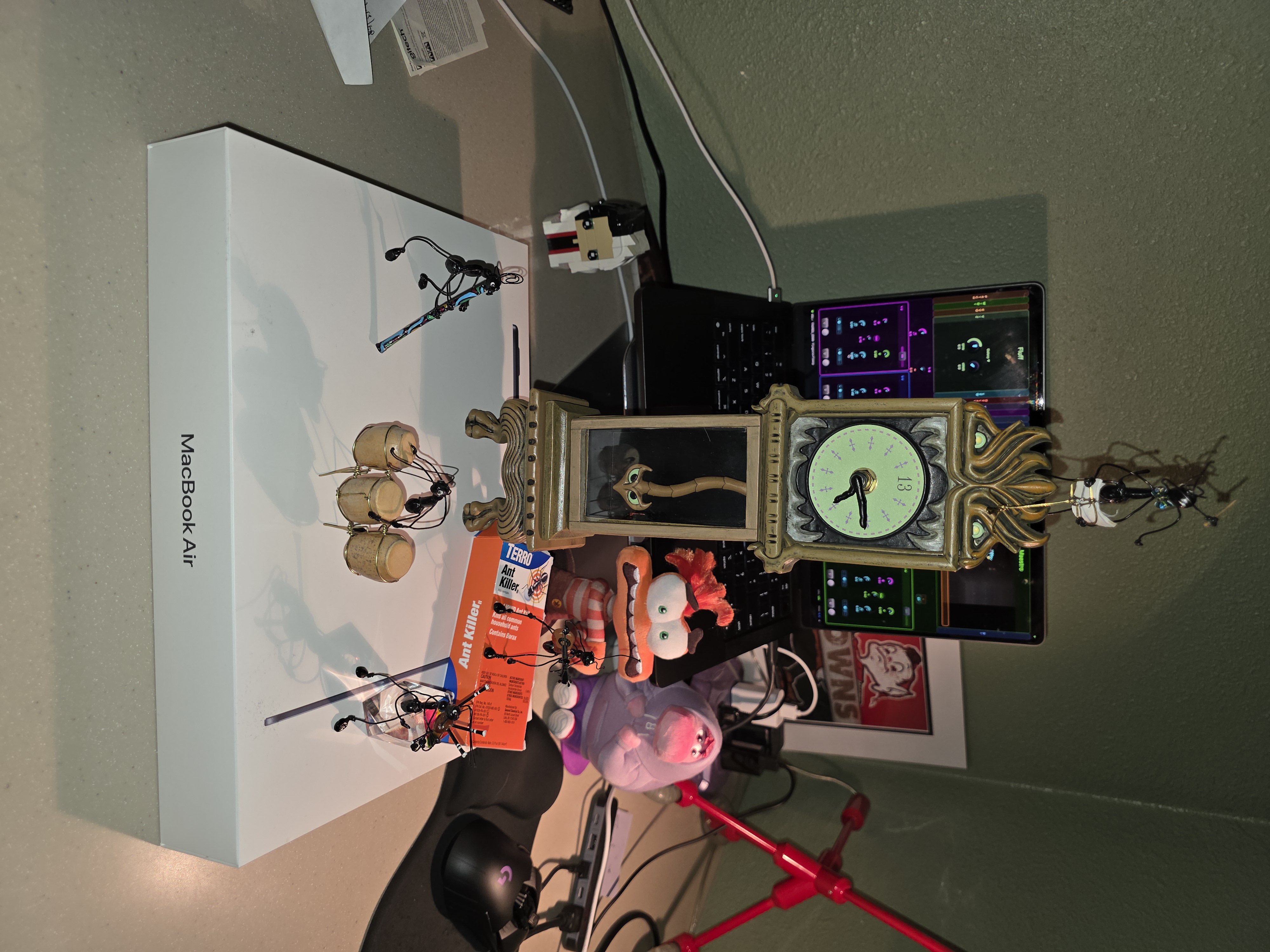

MediaPipe + ML + ASL = Maestro Mode. In this master-POS, Orpheus is accompanied by the Ant-Band and conducted for the first time using the new ASL Maestro Feature. This song is sponsored by Terro.

Piece of Ice Cream Cake

A Valentine’s Day session with TTS and the brand-new Flux module. On February 13th, the full Marbles engine was implemented in Flux, self-registering UI panels were introduced (decoupling UI from layout), the Speech Panel got an animated multi-phase readout with avatar animation, and Panel Sets were added for UI layout management. On recording day (Feb 14th), keyboard input was decoupled from ViewModels, the SharingStarted strategy was made configurable, and feature documentation was centralized into SynthFeature. The front-end architecture was maturing fast.

Orpheus was feeling a little Pink on Valentine’s Day and decided to chill with a piece of Ice Cream Cake.

6-7

Orpheus speaks for the first time. The Speech Synthesis Engine and TTS Player were committed on February 8th, the same day this track was recorded. The day before saw a massive Plaits engine integration: five new physical modeling and granular synth engines, complete gain staging, percussive mode, audio-rate modulation, and the Dattorro plate reverb (ported from Mutable Instruments Rings). The reverb and delay you hear in this track are the brand-new effects getting their first real workout. Also notable: audio thread heap allocations were eliminated that same day, a critical performance fix.

Orpheus utters his first words using his new Plaits-inspired speech synthesis module. A healthy dose of reverb with a touch of delay and LFO provides the atmosphere for this new timeless classic fad hit.

Bootstrap

Bootstrap was cut on a raw prototype – all Kotlin, all the time. The synth ran on JSyn for desktop audio, had 8 oscillators, a Rings-inspired resonator, an 808-style drum machine, and a brand-new AI agent that could barely hold a tune. CPU was slow, the architecture was monolithic, and every recording session doubled as a stress test. By the end of this album the Ports DSL, plugin system, and multi-model AI support had all taken shape.

Encapsulation

This track was recorded in the middle of the biggest architecture overhaul of the Bootstrap era. The Ports DSL – a type-safe nested DSL for defining DSP port connections – was taking shape, along with PortRegistry for unified plugin parameter management. The Duo LFO got speed multipliers, the ViewModel layer was overhauled with SynthController controlFlow, and drum synthesis was abstracted using Plaits engines. The resonator and LFO you hear in this track were literally being refactored as it was being recorded.
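The real Ports DSL and PortRegistry are written in Kotlin, and their API is not shown in these notes. As a loose illustration of unified parameter management, here is a hypothetical C++ registry. Each port is declared once with a range and a default, and every write goes through a single clamped path.

```cpp
#include <cassert>
#include <map>
#include <stdexcept>
#include <string>

// Hypothetical port description: valid range plus default value.
struct PortSpec {
    float min, max, defaultValue;
};

class PortRegistry {
public:
    // Declare a port (e.g. a hypothetical "lfo.speed") with its range and default.
    void define(const std::string& id, PortSpec spec) {
        assert(spec.min <= spec.defaultValue && spec.defaultValue <= spec.max);
        specs_[id] = spec;
        values_[id] = spec.defaultValue;
    }

    // Every parameter write takes the same path and is clamped to the port's range.
    // map::at throws std::out_of_range for an undeclared port.
    void set(const std::string& id, float value) {
        const PortSpec& spec = specs_.at(id);
        values_[id] = value < spec.min ? spec.min : (value > spec.max ? spec.max : value);
    }

    float get(const std::string& id) const { return values_.at(id); }

private:
    std::map<std::string, PortSpec> specs_;
    std::map<std::string, float> values_;
};
```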

A melodic buildup to a sweet, droney howl, aided by the clang of the resonator and guided by a tasteful amount of feedback and LFO.

Welcome to ML

A fully AI-generated drone – Gemini Flash 3.0 with no human intervention. On January 25th, the Looper and Resonator panels were integrated into the Desktop UI, Warps routing was changed to use pre/post-master effects sends, the evolution prompt system got its first-prompt fix (ensuring the AI started generating immediately), and LiveCode was encapsulated into its own panel. The grinding, metallic sound comes from the AI pushing the Warps meta-modulator to extremes the routing wasn’t originally designed for.

Grinding, Metallic, Bending drone generated entirely by Orpheus using Gemini Flash 3.0

AGP 9.0

A celebration song for the Android Gradle Plugin 9.0 migration – hence the name and the @Preview annotation in the description. On January 24th, the Gradle wrapper was upgraded to 9.3.0, DSP plugin access was centralized, and the Matrix meta-modulator (based on Mutable Instruments Warps) got Duo LFO modulation. The live debug log view also landed that day, which was immediately useful for monitoring the CPU-hungry desktop build.

@Preview: Celebration song for the Gradle Plugin migration

Reprise Surprise

One of the earliest AI-composed tracks. The day before, AI feature code was consolidated into commonMain (enabling Kotlin Multiplatform AI support) and the Sonnet model was added alongside centralized model definitions. Gemini Flash 3.0 drove this composition, but the heavy drum backing exposed the limits of the AI’s mix sensibility at this stage.

Gemini Flash 3.0 with a (too) heavy drum backing

Daisy 9000

The first-ever recording on the Orphic-FM synth. On January 11th alone there were 16 commits – AI agent hardening, multi-provider support (OpenAI and Anthropic Haiku3), MIDI learn, generic control routing for Tidal and AI agents, and the drum machine mix control. The 808-style drum synth and physical modeling resonator (based on Mutable Instruments Rings) had landed just the day before. Everything was raw and untested – this recording was as much a shakedown cruise as a composition.

First track recorded on the Orphic-FM Synth. This manual composition was inspired by HAL 9000 as a means to show AI how to express itself through music.